The Operational Data Warehouse

Built to take action on what’s happening right now.

Trusted by teams to deliver fresh, correct results.

How do you operate with the freshest data available?

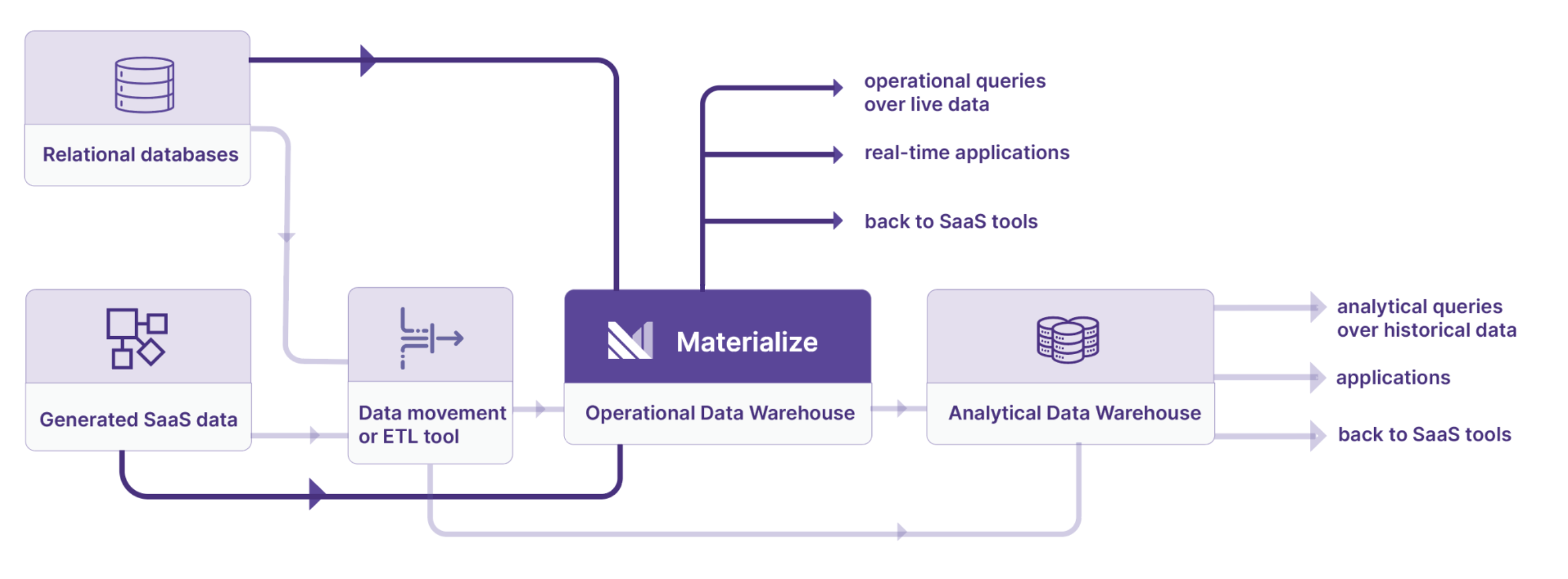

Materialize offers the best of both worlds: a data warehouse that combines real-time data with SQL support.

- Say goodbye to custom engineering. Materialize replaces expensive, custom-built streaming solutions with an SQL-based data warehouse architecture.

- Traditional data warehouses are built on a slow batch architecture, which makes them expensive to use for time-sensitive use cases.

Materialize delivers the best of both worlds, combining the ease of use of your data warehouse with the speed of streaming to enable you to operate with data now.

Serve any use case that needs fresh and consistent data

Move beyond analytics. Operationalize data to power your business. Materialize serves any use case that requires fresh and consistent data, including:

Automation and Alerting

→Eliminate manual work, remove delays, and move faster by acting on your data automatically.

Real-time Fraud Detection

→Monitor transactions as they occur and stop fraud in seconds.

User-Facing Apps and Features

→Dashboards and data products need to react to up-to-the-minute changes in your business.

Real-Time Customer Data Platforms

→The value of personalization, recommendations, and dynamic pricing increases as the latency of data aggregations approaches zero.

ML and AI Serving

→Online feature stores need up-to-date data, and operators need to monitor and react to changes fast.

Not sure which use case fits best? Start by learning more about how Materialize works, or by trying our guided tutorial yourself.

Built to deliver trust, scale, and ease

Materialize’s unique architecture helps you operate with data now by delivering data you can trust, at a scale that supports your business, without the heavy lifting of custom-made solutions.

Trust

To trust your data for operational work, you need it to be responsive, fresh, and consistent. Materialize combines the best of streaming systems and data warehousing to deliver instantly updated results the moment your input data changes.

Scale

Your operational data warehouse should match your business in lockstep. Materialize can scale up through multiple cores and computers. It scales out to as many use cases as you’d like through storage and compute isolation. It even scales down to a single core if that’s all you need.

Ease

Materialize helps you take the heavy lifting out of near real-time data. You can interact with data using standard SQL. Connect to common data sources like Kafka and Postgres in minutes. Pay only for the compute and storage that you actually use.

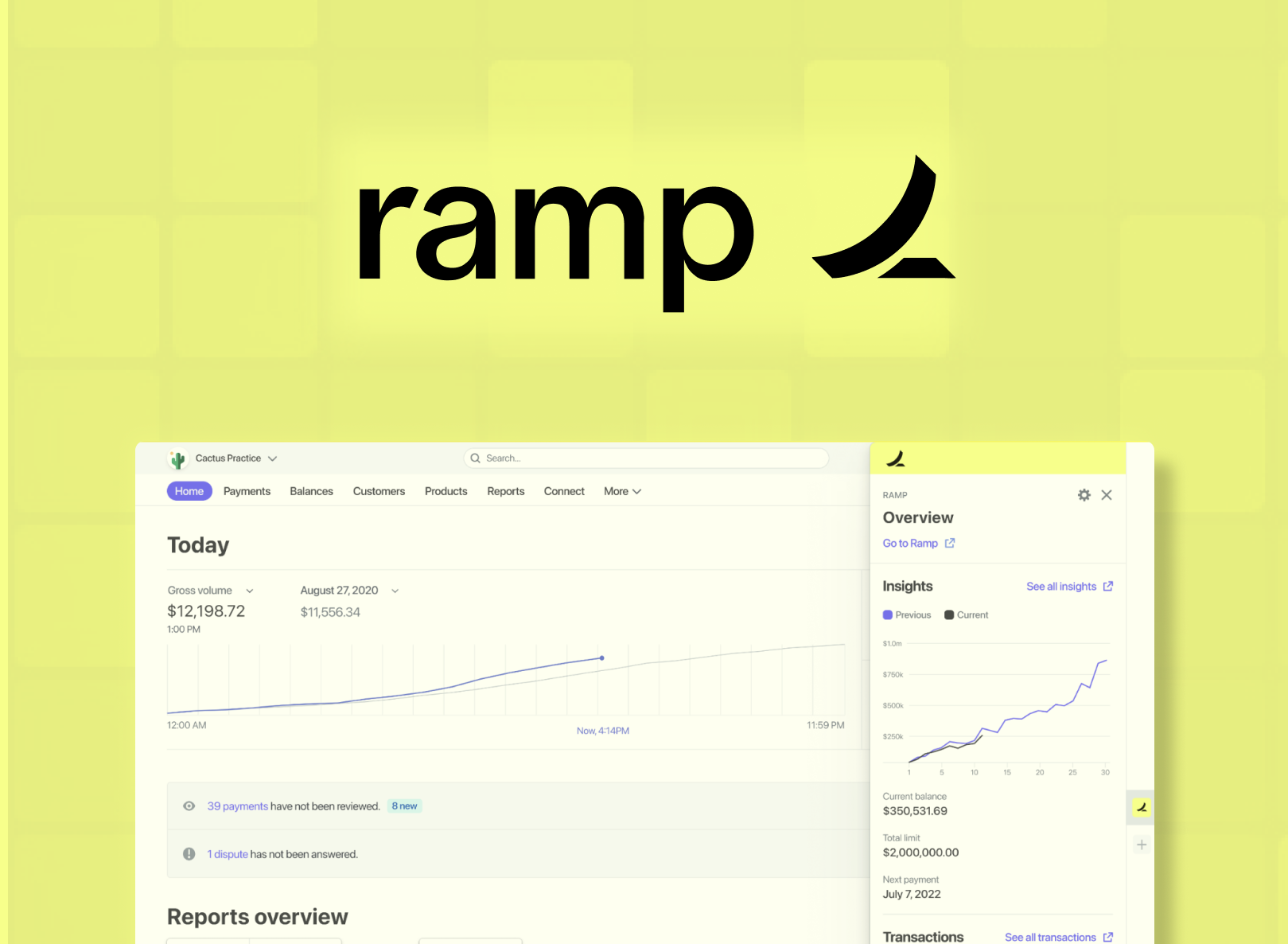

By moving SQL models for fraud detection from an analytics warehouse to Materialize, Ramp cut lag from hours to seconds, stopped 60% more fraud, and reduced infrastructure costs by 10x.

Ryan Delgado, Staff Software Engineer, Data Platform, Ramp

Try Materialize Free

Powered by a revolutionary engine.

Materialize's compute engine is built on Timely Dataflow and Differential Dataflow, incrementally maintaining results with sub-second latency.
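To give a feel for what incrementally maintaining a result means, here is a minimal Python sketch. It is a toy illustration only, not the Timely Dataflow or Differential Dataflow API: the `IncrementalSum` class, its methods, and the account keys are hypothetical names invented for this example. It keeps a grouped sum up to date by applying each change as a small delta instead of recomputing from scratch.

```python
from collections import defaultdict


class IncrementalSum:
    """Toy incrementally maintained view: SUM(amount) grouped by key.

    Hypothetical illustration; real engines handle far more than sums.
    """

    def __init__(self):
        self.totals = defaultdict(int)  # key -> running sum
        self.counts = defaultdict(int)  # key -> number of live rows

    def apply(self, key, amount, diff):
        """Apply one change: diff=+1 inserts a row, diff=-1 retracts it."""
        self.totals[key] += amount * diff
        self.counts[key] += diff
        if self.counts[key] == 0:
            # No rows remain for this key, so drop it from the view.
            del self.totals[key]
            del self.counts[key]

    def snapshot(self):
        """Return the current result without rescanning any input."""
        return dict(self.totals)


view = IncrementalSum()
view.apply("acct-1", 100, +1)
view.apply("acct-1", 250, +1)
view.apply("acct-2", 40, +1)
view.apply("acct-1", 100, -1)  # retract the first row
print(view.snapshot())
```

Each call to `apply` does a constant amount of work, so the cost of keeping the result fresh depends on the size of the change rather than the size of the input.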

Operationalize Your Data Stack

Materialize integrates seamlessly with your existing data stack, workflows, and teams. Power business processes with your data while keeping your data architecture intact.

Model SQL in dbt

Manage the entire operational lifecycle via familiar dbt workflows.

Query like it's PostgreSQL

Query Materialize from any driver or tool that connects to PostgreSQL.
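As a rough sketch of what that looks like from application code, the Python below builds a PostgreSQL-style connection URI and runs a query through `psycopg2`, a widely used PostgreSQL driver. The host name, user, password, and the helper names `materialize_dsn` and `fetch_rows` are placeholders made up for this example, and the port `6875` and database `materialize` are assumed defaults that you should check against your own environment.

```python
from urllib.parse import quote


def materialize_dsn(host, user, password, database="materialize", port=6875):
    """Build a PostgreSQL-style connection URI (port and database are assumed defaults)."""
    return (
        f"postgresql://{quote(user, safe='')}:{quote(password, safe='')}"
        f"@{host}:{port}/{database}?sslmode=require"
    )


def fetch_rows(dsn, sql):
    """Run one query with psycopg2 and return all rows."""
    # Third-party driver, imported lazily so the URI helper works without it.
    import psycopg2

    conn = psycopg2.connect(dsn)
    try:
        with conn.cursor() as cur:
            cur.execute(sql)
            return cur.fetchall()
    finally:
        conn.close()


# Placeholder credentials for illustration only.
dsn = materialize_dsn("example.materialize.cloud", "user@example.com", "app-password")
print(dsn)
```

With a reachable instance and `psycopg2` installed, `fetch_rows(dsn, "SELECT 1")` would run the query the same way it would against a PostgreSQL server.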

Manage in Terraform

Provision and manage connections, sources, and other database objects with Terraform.

Deliver real time with Kafka

Build your use case on top of data from Kafka, or sink results back out to Kafka.