Build RAG systems that work with live, structured data

RAG systems excel at retrieving unstructured text but fall short when applications need real-time business context. Combine semantic search with continuously updated structured data to deliver AI experiences that reflect current reality—inventory levels, user preferences, market prices, and operational metrics that change moment by moment.

Real-time data drives better AI outcomes

Overcome common RAG limitations with structured data

Traditional RAG approaches struggle with dynamic business context. Address these fundamental limitations by integrating real-time structured data into your AI pipeline.

Use cases for structured data in RAG

Combine product descriptions from your knowledge base with real-time inventory, user preferences, and pricing data. Enable AI assistants to provide accurate delivery estimates, personalized recommendations, and current availability without relying on stale cached data.

Join market research documents with live portfolio positions, current market prices, and client risk profiles. Generate investment recommendations that reflect actual holdings and market conditions, not yesterday's closing prices.

Augment support documentation with real-time order status, account history, and system health metrics. Resolve issues faster with AI agents that understand both policies and current customer state.

Combine logistics documentation with live shipment tracking, inventory levels, and supplier performance data. Identify bottlenecks and optimize routes based on current conditions, not historical averages.

Layer regulatory guidelines with real-time transaction patterns, account behaviors, and risk scores. Identify suspicious activity using both institutional knowledge and current transactional context.

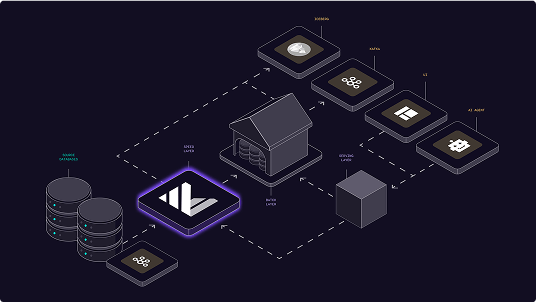

Architecture: Materialize as your structured data layer

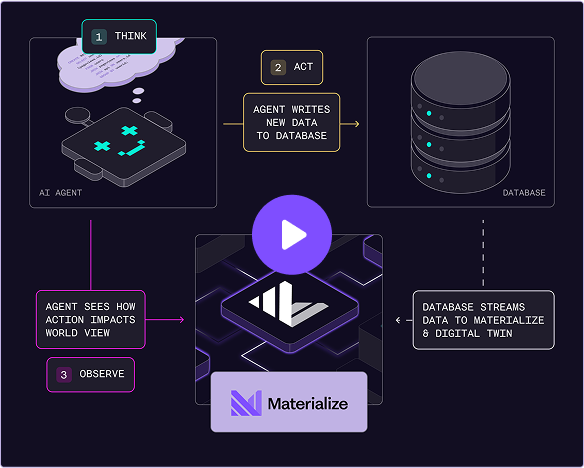

Materialize maintains continuously updated views of your operational data using SQL transformations. RAG retrieval combines semantic search results with real-time structured context through materialized views that update incrementally as source data changes. This architecture separates analytical workloads from production systems while ensuring AI responses reflect current business state.
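As a rough illustration of the retrieval step described above, the sketch below merges semantic search hits with rows read from a continuously maintained view before the prompt is assembled. Everything named here is an assumption made for the example: the `DocHit` shape, the `build_context` helper, and the `in_stock` and `price` fields. In a real deployment the `live_rows` dictionary would be filled by a query against the view rather than hard-coded.

```python
from dataclasses import dataclass


@dataclass
class DocHit:
    """One semantic-search result (hypothetical shape)."""
    product_id: str
    text: str
    score: float


def build_context(hits, live_rows):
    """Attach the current structured row to each retrieved document.

    `live_rows` maps product_id -> dict of fields read from a maintained
    view (hypothetical fields: in_stock, price).
    """
    blocks = []
    # Highest-scoring documents come first in the prompt context.
    for hit in sorted(hits, key=lambda h: h.score, reverse=True):
        row = live_rows.get(hit.product_id)
        if row is None:
            # No live row: say so explicitly rather than guessing.
            live = "live data: unavailable"
        else:
            live = f"live data: in_stock={row['in_stock']}, price={row['price']:.2f}"
        blocks.append(f"[{hit.product_id}] {hit.text}\n{live}")
    return "\n\n".join(blocks)


hits = [
    DocHit("sku-1", "Waterproof hiking boot.", 0.82),
    DocHit("sku-2", "Trail running shoe.", 0.91),
]
live_rows = {"sku-2": {"in_stock": 14, "price": 89.5}}  # stand-in for a view lookup
context = build_context(hits, live_rows)
print(context)
```

Marking missing rows as unavailable, instead of silently dropping them, gives the model a way to tell "no live data" apart from "out of stock".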

Implementation: From SQL views to AI tools

Define business logic once as SQL materialized views. Materialize automatically exposes indexed views as callable tools through the Model Context Protocol (MCP). AI models can access real-time business context without custom API development or complex caching strategies. Views update incrementally and serve results with single-digit millisecond latency.
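To make the view-to-tool idea concrete, here is a minimal sketch of how an indexed view's metadata could be turned into an MCP-style tool descriptor with `name`, `description`, and `inputSchema` fields. The view name, its comment, the column types, and the `view_to_tool` helper are all hypothetical. The source does not show the mapping Materialize actually performs, so treat this as one plausible shape rather than its real behavior.

```python
import json

# Hypothetical metadata for one indexed view; names are illustrative only.
view = {
    "name": "customer_order_status",
    "comment": "Current status of a customer's open orders.",
    "indexed_columns": {"customer_id": "integer"},
}


def view_to_tool(view):
    """Build an MCP-style tool descriptor: indexed columns become inputs."""
    properties = {
        col: {"type": json_type}
        for col, json_type in view["indexed_columns"].items()
    }
    return {
        "name": view["name"],
        "description": view["comment"],
        "inputSchema": {
            "type": "object",
            "properties": properties,
            "required": sorted(properties),
        },
    }


tool = view_to_tool(view)
print(json.dumps(tool, indent=2))
```

Under this assumption, the indexed columns serve as the lookup keys a model supplies when it calls the tool, and the view's comment acts as the description the model reads when choosing a tool.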

Implementation requirements and best practices

Successfully implementing structured data for RAG requires attention to data freshness, query performance, and system architecture. These considerations ensure your AI applications remain responsive and accurate.

Balance update frequency with query performance by choosing appropriate materialization strategies. Use incremental view maintenance for frequently changing data and batch updates for stable reference data. Monitor view refresh latency against an explicit freshness target so that AI responses never rely on data older than your application can tolerate.
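One way to make freshness monitoring actionable is to compare the timestamp of the newest source change reflected in a view against a per-use-case budget. The sketch below assumes you can obtain such a timestamp. The `freshness_lag` and `is_fresh` helpers and the five-second budget are hypothetical choices for illustration.

```python
from datetime import datetime, timedelta, timezone


def freshness_lag(source_ts, now):
    """Time elapsed between the newest reflected source change and `now`."""
    return now - source_ts


def is_fresh(source_ts, now, budget):
    """True when the view is within the allowed staleness budget."""
    return freshness_lag(source_ts, now) <= budget


# Fixed timestamps keep the example deterministic.
now = datetime(2024, 1, 1, 12, 0, 0, tzinfo=timezone.utc)
budget = timedelta(seconds=5)  # hypothetical target for inventory data

recent = now - timedelta(seconds=2)
stale = now - timedelta(minutes=3)

print(is_fresh(recent, now, budget))
print(is_fresh(stale, now, budget))
```

An application could use a check like this to add a caveat to the model's answer, or to skip the structured context entirely, when a view falls behind its budget.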

Design materialized views to handle schema changes gracefully. Use explicit column selection and type casting to maintain compatibility when source schemas evolve. Version view definitions to support rollback scenarios and A/B testing of new business logic.
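The effect of explicit column selection and casting can be shown with a small, runnable sketch. It uses Python's built-in `sqlite3` module and an ordinary view purely as a stand-in, since SQLite has no materialized views. The table, the columns, and the `_v1` naming convention are assumptions for the example, not Materialize specifics.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER, amount TEXT, status TEXT)")
conn.execute("INSERT INTO orders VALUES (1, '19.90', 'shipped')")

# Explicit columns and casts pin the view's output shape; the _v1 suffix
# is a hypothetical versioning convention that leaves room for a _v2.
conn.execute(
    """
    CREATE VIEW order_summary_v1 AS
    SELECT id AS order_id,
           CAST(amount AS REAL) AS amount,
           status
    FROM orders
    """
)

# The source schema evolves: a new column appears upstream.
conn.execute("ALTER TABLE orders ADD COLUMN warehouse TEXT")

cur = conn.execute("SELECT * FROM order_summary_v1")
columns = [d[0] for d in cur.description]
rows = cur.fetchall()
print(columns, rows)
```

Because the view never uses `SELECT *`, the new `warehouse` column does not leak into what downstream consumers see. A later `order_summary_v2` could expose it while the first version stays in place for rollback.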

AI models can generate high query volumes with unpredictable access patterns. Create indexes on commonly filtered columns and cache results for queries that repeat. Monitor query performance and refine view definitions based on the queries your tools actually receive.
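For queries that repeat, a short-lived cache in front of the query path is one option. The sketch below is a minimal time-to-live cache with an injectable clock so that its behavior is deterministic. The `TTLCache` class, the two-second TTL, and the `run_query` stand-in are hypothetical. The TTL should stay well below your freshness target, otherwise the cache undoes the benefit of live views.

```python
import time


class TTLCache:
    """Tiny time-to-live cache keyed by (tool, argument) tuples."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock
        self._store = {}  # key -> (stored_at, value)

    def get_or_compute(self, key, compute):
        now = self.clock()
        entry = self._store.get(key)
        if entry is not None and now - entry[0] < self.ttl:
            return entry[1]  # still within TTL: reuse the cached result
        value = compute()  # missing or expired: run the query again
        self._store[key] = (now, value)
        return value


calls = []
fake_now = [0.0]  # mutable fake clock for a deterministic demo
cache = TTLCache(ttl_seconds=2.0, clock=lambda: fake_now[0])


def run_query():
    """Stand-in for a real database query; records each execution."""
    calls.append(1)
    return {"sku-2": 14}


key = ("inventory", "sku-2")
cache.get_or_compute(key, run_query)  # miss: runs the query
cache.get_or_compute(key, run_query)  # hit: served from cache
fake_now[0] = 3.0
cache.get_or_compute(key, run_query)  # expired: runs the query again
print(len(calls))
```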

Implement row-level security and column-level permissions in materialized views to ensure AI models only access authorized data. Use database roles to scope tool availability and audit query patterns to detect anomalous access attempts.
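Scoping tools by role and auditing access can also be sketched on the application side. The role names, the tool names, and the `tools_for` and `authorize` helpers below are hypothetical. A check like this complements database-level permissions and does not replace them.

```python
# Hypothetical mapping from role to the tools (views) it may call.
ROLE_TOOLS = {
    "support_agent": {"customer_order_status"},
    "analyst": {"customer_order_status", "portfolio_positions"},
}

audit_log = []


def tools_for(role):
    """List the tools visible to a role; unknown roles see nothing."""
    return sorted(ROLE_TOOLS.get(role, set()))


def authorize(role, tool):
    """Allow or deny a tool call and record the attempt for auditing."""
    allowed = tool in ROLE_TOOLS.get(role, set())
    audit_log.append({"role": role, "tool": tool, "allowed": allowed})
    return allowed


print(tools_for("support_agent"))
print(authorize("support_agent", "portfolio_positions"))
denied = [entry for entry in audit_log if not entry["allowed"]]
print(denied)
```

Reviewing the denied entries over time is one simple way to spot the anomalous access attempts mentioned above.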

Ready to enhance your RAG system with structured data?

Start building AI applications that work with live business context. Materialize provides the real-time data layer your RAG systems need to deliver accurate, timely responses.